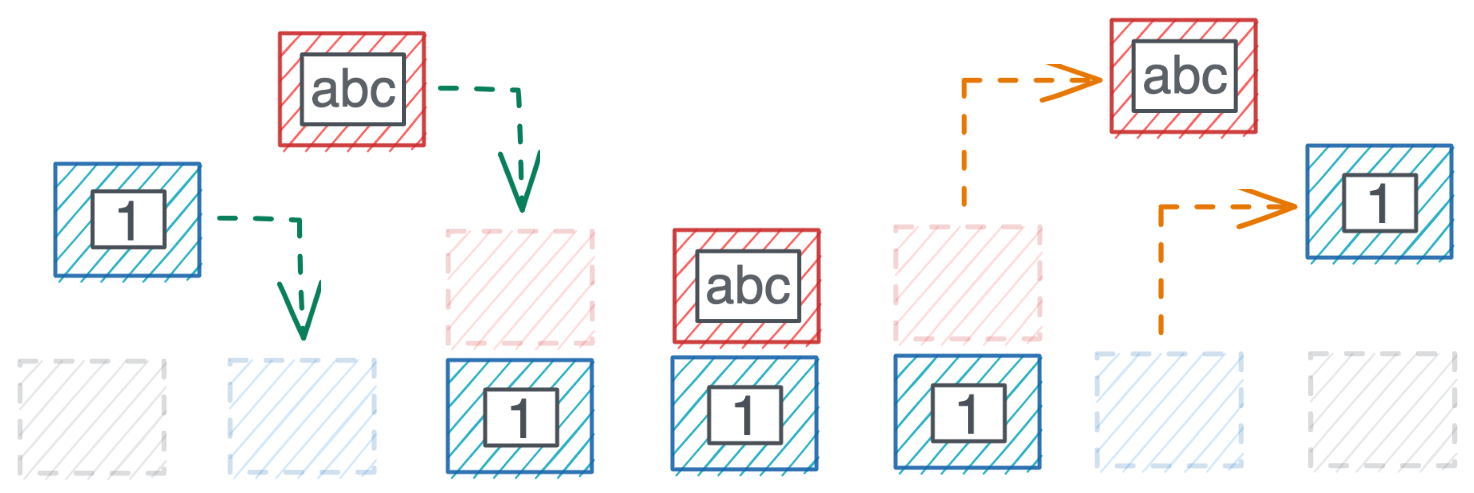

A queue-based model might make implementing a real optimizing compiler substantially easier, so long as one is willing to program in terms of an implicit queue. I just think that for my particular low-level problem domain, this may be of much more than academic interest.

Could you give an example of queue-based processing?

A really fundamental advantage that stacks have is that they are trivial to implement efficiently: you just need to increment or decrement a pointer. Queue implementations such as ring buffers are much more complicated, requiring separate pointers for the start and end and special support for wrapping around when the end of the allocated capacity is reached. Stacks are also a natural fit for holding intermediate values when evaluating (arithmetic) expressions.
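A minimal sketch of that cost difference, in Python with a fixed-size list standing in for raw memory (the class names `ArrayStack` and `RingQueue` are illustrative, not from any library). The stack needs a single index. The ring buffer needs a head index, a tail index, a way to tell "full" from "empty" (here a count, one of several common choices), and modular wraparound.

```python
class ArrayStack:
    """Fixed-capacity stack: push and pop only move one index."""

    def __init__(self, capacity):
        self.slots = [None] * capacity
        self.top = 0  # index of the next free slot (the "stack pointer")

    def push(self, value):
        if self.top == len(self.slots):
            raise OverflowError("stack full")
        self.slots[self.top] = value
        self.top += 1  # increment the pointer

    def pop(self):
        if self.top == 0:
            raise IndexError("stack empty")
        self.top -= 1  # decrement the pointer
        return self.slots[self.top]


class RingQueue:
    """Fixed-capacity FIFO ring buffer: separate head/tail indices plus wraparound."""

    def __init__(self, capacity):
        self.slots = [None] * capacity
        self.head = 0   # index of the oldest element (next to dequeue)
        self.tail = 0   # index of the next free slot (next to enqueue)
        self.count = 0  # distinguishes "full" from "empty" when head == tail

    def enqueue(self, value):
        if self.count == len(self.slots):
            raise OverflowError("queue full")
        self.slots[self.tail] = value
        self.tail = (self.tail + 1) % len(self.slots)  # wrap at end of capacity
        self.count += 1

    def dequeue(self):
        if self.count == 0:
            raise IndexError("queue empty")
        value = self.slots[self.head]
        self.head = (self.head + 1) % len(self.slots)  # wrap at end of capacity
        self.count -= 1
        return value
```

Pushing 1, 2, 3 onto an `ArrayStack(3)` and popping returns 3, 2, 1. A `RingQueue(3)` returns 1, 2, 3, 4 in order even when the fourth element is written back into slot 0. With a power-of-two capacity the `%` can become a bitmask, which keeps wraparound cheap in low-level code, but it is still more bookkeeping than the stack's single pointer.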

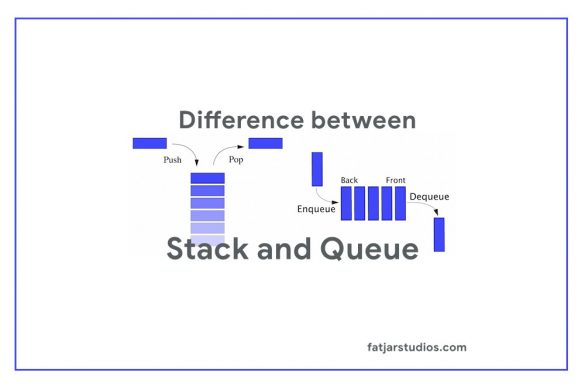

I have found that when trying to schedule low-level assembly code, operations on FIFO queues produce natural orderings of pipelined instructions, whereas LIFO stacks produce complications and convolutions. I'm wondering whether any other programming language designs have noticed this and pursued it, particularly at the machine instruction level. Can anyone give examples of programming languages that are based on the use of queues, not stacks, to call functions and process operations? That is, there is no program stack; there is a program queue. By contrast, in concatenative languages such as Forth, operations take their operands from a stack.

I've tried to do a fair amount of due diligence on the subject, but the internet mostly talks about stack architectures and register architectures. I have not really seen anything about a FIFO queue being the fundamental unit of computation. I feel like I might be missing some basic computer science term, one that people know about and that nevertheless almost nobody designs with or considers.
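As one possible example of queue-based processing, here is a sketch of what architecture literature calls a *queue machine*, next to a Forth-style stack machine for comparison. It assumes a hypothetical expression-tree encoding (a number for a leaf, `(op, left, right)` for an operator node). The stack program is a post-order traversal. The queue program is a reverse level-order (breadth-first) traversal: deepest level first, left to right within each level, where each operator dequeues its two operands from the front and enqueues its result at the back.

```python
from collections import deque
import operator

# Hypothetical tree encoding: a leaf is a number,
# an operator node is a tuple (op_symbol, left, right).
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def stack_code(tree):
    """Post-order traversal: the instruction order a stack machine (or Forth) uses."""
    if not isinstance(tree, tuple):
        return [tree]
    op, left, right = tree
    return stack_code(left) + stack_code(right) + [op]


def run_stack(code):
    """Operators pop their operands from the top of a LIFO stack."""
    stack = []
    for instr in code:
        if instr in OPS:
            right = stack.pop()  # top of stack is the right operand
            left = stack.pop()
            stack.append(OPS[instr](left, right))
        else:
            stack.append(instr)
    return stack.pop()


def queue_code(tree):
    """Reverse level-order traversal: deepest level first, left to right within a level."""
    levels = []
    frontier = [tree]
    while frontier:
        levels.append(frontier)
        # Children of every operator node on this level, in left-to-right order.
        frontier = [child for node in frontier if isinstance(node, tuple)
                    for child in node[1:]]
    code = []
    for level in reversed(levels):
        code.extend(node[0] if isinstance(node, tuple) else node for node in level)
    return code


def run_queue(code):
    """Operators dequeue operands from the front and enqueue results at the back."""
    queue = deque()
    for instr in code:
        if instr in OPS:
            left = queue.popleft()  # front of queue is the left operand
            right = queue.popleft()
            queue.append(OPS[instr](left, right))
        else:
            queue.append(instr)
    return queue.popleft()


tree = ("*", ("+", 1, 2), ("-", 7, 4))  # (1 + 2) * (7 - 4)
print(stack_code(tree), run_stack(stack_code(tree)))
print(queue_code(tree), run_queue(queue_code(tree)))
```

For `(1 + 2) * (7 - 4)` the stack program is `1 2 + 7 4 - *` and the queue program is `1 2 7 4 + - *`; both evaluate to 9. In the queue program, every instruction on one tree level reads only values produced by the level below, so the `+` and `-` do not depend on each other's results. That is one plausible reading of why FIFO ordering seems to line up with pipelined scheduling, though this sketch makes no claim about any particular hardware.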
